Introduction to the AI-Driven Plugin Revolution

In the sophisticated ecosystem of modern digital audio workstations, Ableton Live stands as a premier platform for both composition and live performance. Its extensibility through third-party plugins has always been a cornerstone of its success. At the foundation of this extensibility lies Steinberg’s Virtual Studio Technology (VST) standard, specifically the VSTSDK and its companion VSTGUI library. As artificial intelligence permeates every facet of creative technology, these tools are undergoing a profound transformation, enabling developers to craft plugins that transcend traditional signal processing into the realm of adaptive, learning, and generative systems.

This technical exploration examines how Steinberg VSTSDK and VSTGUI are being leveraged in the AI era to revolutionize Ableton plugins. We will dissect the architectural nuances of VST3, explore real-time integration of neural networks, analyze GUI design principles for complex AI parameters, address performance challenges inherent in low-latency audio environments, and project the trajectory of intelligent music production tools. Whether you are a plugin developer, audio DSP engineer, or forward-thinking producer, this article provides the technical depth required to navigate this evolving landscape.

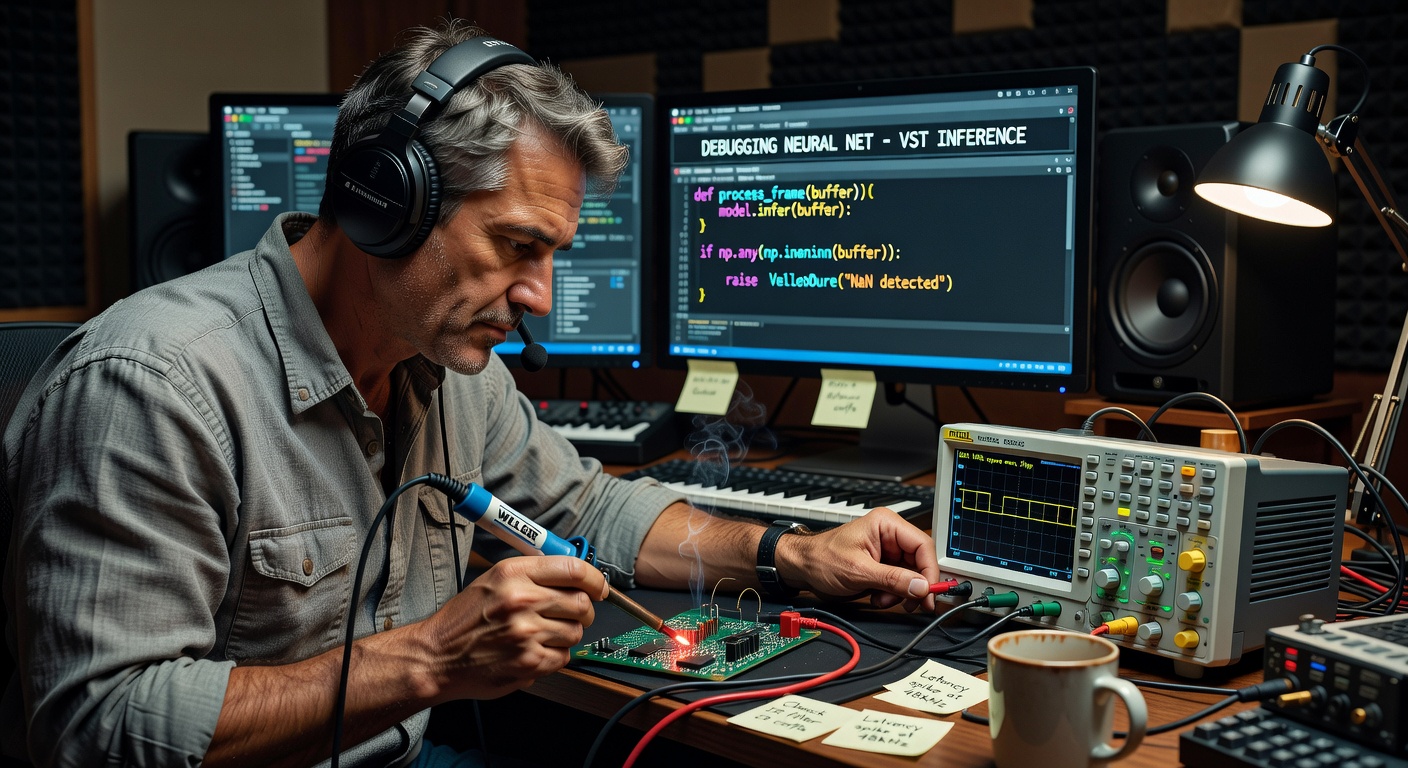


Understanding Steinberg VSTSDK: The Technical Foundation

The Steinberg VSTSDK represents far more than a simple API; it is a comprehensive development framework written primarily in C++ that facilitates the creation of cross-platform, professional-grade audio plugins. Since its initial release in 1996, the standard has evolved significantly. VST2, while ubiquitous for many years, has been superseded by VST3, which introduces numerous architectural improvements critical for modern AI implementations.

VST3 offers sample-accurate automation, improved side-chain handling, note expression capabilities, and a clean separation between the audio processor and the edit controller. The SDK provides base classes such as Steinberg::Vst::AudioEffect for the processor, Steinberg::Vst::EditController for the controller, Steinberg::Vst::SingleComponentEffect for simpler plugins that combine both in one object, and Steinberg::Vst::EditorView for the editor window. Together they form the scaffolding for both audio processing and user interface components.
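Sample-accurate automation means each parameter change carries a sample offset inside the current block. In the SDK the host delivers these as points in an IParamValueQueue; the sketch below substitutes a plain struct (ParamPoint, a hypothetical name) so it compiles on its own. It treats consecutive points as the ends of linear ramps and applies an automated gain accordingly.

```cpp
#include <vector>

// Stand-in for one point read from a VST3 IParamValueQueue:
// the parameter reaches `value` at sample `offset` within the block.
struct ParamPoint {
    int offset;
    double value;
};

// Applies a gain that ramps linearly between automation points.
// `startValue` is the gain in effect at the end of the previous block;
// the return value is the gain in effect at the end of this block.
double applyAutomatedGain(float* buffer, int numSamples, double startValue,
                          const std::vector<ParamPoint>& points) {
    double prevValue = startValue;
    int prevOffset = 0;
    int i = 0;
    for (const ParamPoint& p : points) {
        const int segmentLength = p.offset - prevOffset;
        for (; i < p.offset && i < numSamples; ++i) {
            // Interpolate from prevValue (at prevOffset) to p.value (at p.offset).
            const double t = segmentLength > 0
                ? static_cast<double>(i - prevOffset) / segmentLength : 1.0;
            buffer[i] *= static_cast<float>(prevValue + t * (p.value - prevValue));
        }
        prevValue = p.value;
        prevOffset = p.offset;
    }
    // Hold the last value for the remainder of the block.
    for (; i < numSamples; ++i)
        buffer[i] *= static_cast<float>(prevValue);
    return prevValue;
}
```

Returning the final value lets the next block start its ramp from where this one ended, which avoids zipper noise at block boundaries.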

When targeting Ableton Live, developers must adhere strictly to the VST3 specification, particularly regarding parameter management and state persistence. Live scans its plugin folders, and an incorrect implementation of the IComponent or IEditController interfaces can cause a plugin to fail that scan or to behave unstably during live performance.
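State persistence is a common source of such failures. IComponent::getState() and setState() exchange an opaque byte stream, so putting a version number at the front lets a newer build read sessions saved by an older one. The sketch uses std::vector<std::uint8_t> in place of the SDK's IBStream, and the PluginState fields are hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <optional>
#include <vector>

// Hypothetical plugin state. In the SDK these fields would be written to and
// read from an IBStream in getState()/setState().
struct PluginState {
    float mix = 1.0f;        // present since version 1
    float creativity = 0.5f; // added in version 2
};

constexpr std::uint32_t kStateVersion = 2;

// Appends the raw bytes of v in native byte order
// (the SDK's IBStreamer can enforce a fixed byte order instead).
template <typename T>
void writeValue(std::vector<std::uint8_t>& out, T v) {
    std::uint8_t bytes[sizeof(T)];
    std::memcpy(bytes, &v, sizeof(T));
    out.insert(out.end(), bytes, bytes + sizeof(T));
}

// Reads one value; fails instead of reading past the end of truncated data.
template <typename T>
bool readValue(const std::vector<std::uint8_t>& in, std::size_t& pos, T& v) {
    if (pos + sizeof(T) > in.size()) return false;
    std::memcpy(&v, in.data() + pos, sizeof(T));
    pos += sizeof(T);
    return true;
}

std::vector<std::uint8_t> saveState(const PluginState& s) {
    std::vector<std::uint8_t> out;
    writeValue(out, kStateVersion);
    writeValue(out, s.mix);
    writeValue(out, s.creativity);
    return out;
}

// Returns nullopt for truncated or newer-version data so the caller can keep
// defaults and report failure to the host instead of crashing.
std::optional<PluginState> loadState(const std::vector<std::uint8_t>& in) {
    PluginState s;
    std::size_t pos = 0;
    std::uint32_t version = 0;
    if (!readValue(in, pos, version) || version == 0 || version > kStateVersion)
        return std::nullopt;
    if (!readValue(in, pos, s.mix)) return std::nullopt;
    if (version >= 2 && !readValue(in, pos, s.creativity)) return std::nullopt;
    return s; // version-1 data keeps the default creativity
}
```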

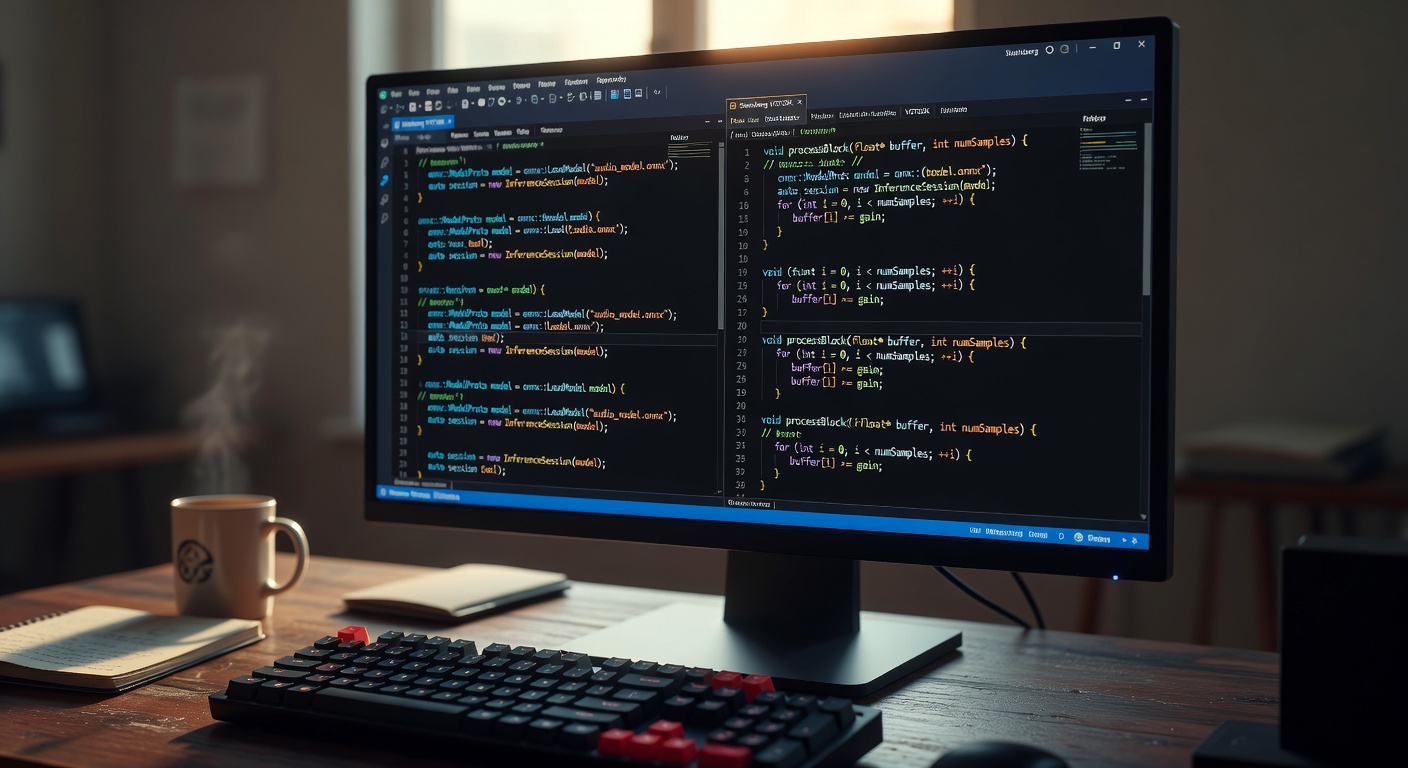


VSTGUI: Crafting Professional and Responsive Interfaces

VSTGUI is Steinberg’s C++ GUI library designed specifically for audio plugin development. It supports vector drawing and high-DPI scaling, so plugins keep their visual fidelity across display densities and operating systems. In AI-enhanced plugins, VSTGUI becomes even more important because interfaces must expose model selection, training feedback loops, and generative controls that have no counterpart in traditional effects.

Key features of VSTGUI 4 include high-DPI support through vector drawing and multi-resolution bitmaps, an animation API, and high-performance custom view classes. Developers can create bespoke controls representing AI concepts, for instance a ‘creativity’ knob that modulates the temperature parameter of a generative model, or a waveform-morphing visualizer that displays latent space navigation.
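Here is one way such a ‘creativity’ knob could work. The knob’s normalized value is mapped to a sampling temperature, and the model’s logits are divided by that temperature before a softmax. Low temperatures concentrate probability on the most likely output, while high temperatures flatten the distribution. The 0.1 to 2.0 range and both function names are illustrative assumptions.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Maps a normalized knob value in [0, 1] to a temperature.
// The 0.1..2.0 range is an illustrative choice, not a standard.
double knobToTemperature(double knob) {
    knob = std::clamp(knob, 0.0, 1.0);
    return 0.1 + knob * (2.0 - 0.1);
}

// Temperature-scaled softmax: probabilities proportional to exp(logit / T).
std::vector<double> softmaxWithTemperature(const std::vector<double>& logits,
                                           double temperature) {
    std::vector<double> probs(logits.size());
    if (logits.empty()) return probs;
    const double maxLogit = *std::max_element(logits.begin(), logits.end());
    double sum = 0.0;
    for (std::size_t i = 0; i < logits.size(); ++i) {
        // Subtracting the max keeps exp() from overflowing.
        probs[i] = std::exp((logits[i] - maxLogit) / temperature);
        sum += probs[i];
    }
    for (double& p : probs) p /= sum;
    return probs;
}
```

This version allocates, which is acceptable on a generative model’s worker thread. Code running on the audio thread would need to write into preallocated storage instead.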

The library’s CView and CControl hierarchies allow for deep customization. When implementing AI-driven interfaces, parameter exchange between the GUI and the audio processor must never make the audio thread wait on the UI thread. A stalled audio callback, not a slow redraw, is what produces audible dropouts in Ableton Live sessions.
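A common way to decouple the two threads is for the audio thread to publish display-only values, such as a model’s confidence or a latent coordinate, into lock-free atomics that an editor timer polls. This is a sketch under one assumption: the processor and editor share a process. VST3 does not guarantee that, and the spec-compliant routes are IMessage or output parameter changes. The class name DisplayValues is hypothetical.

```cpp
#include <array>
#include <atomic>
#include <cstddef>

// Values the audio thread publishes for display. Each slot is an independent
// atomic, so the GUI may see values from different blocks; that is fine for
// meters and visualizers but not for data that must stay mutually consistent.
template <std::size_t N>
class DisplayValues {
public:
    // Audio thread: never blocks, never allocates.
    void publish(std::size_t index, float value) {
        slots_[index].store(value, std::memory_order_relaxed);
        version_.fetch_add(1, std::memory_order_release);
    }

    // GUI thread (e.g. from a timer): returns true if anything changed since lastSeen.
    bool read(std::array<float, N>& out, unsigned& lastSeen) const {
        const unsigned v = version_.load(std::memory_order_acquire);
        if (v == lastSeen) return false;
        for (std::size_t i = 0; i < N; ++i)
            out[i] = slots_[i].load(std::memory_order_relaxed);
        lastSeen = v;
        return true;
    }

private:
    std::array<std::atomic<float>, N> slots_{};
    std::atomic<unsigned> version_{0};
};
```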

Ableton Live’s VST Integration Architecture

Ableton Live hosts VST plugins in both its Session and Arrangement views. It supports VST2 and VST3 plugins (VST3 since Live 10.1). VST3 is strongly recommended for new AI developments because of its superior event handling and parameter system, and because Steinberg no longer offers the VST2 SDK to new developers.

Developers must consider Live’s distinctive requirements:

- robust preset management through Live’s browser;
- correct beginEdit()/performEdit()/endEdit() bracketing of GUI parameter changes, so Live can record automation and undo steps;
- proper handling of transport synchronization for tempo-aware AI plugins.

The SDK’s IMidiMapping interface maps incoming MIDI controllers to plugin parameters, which makes it valuable for plugins that respond intelligently to MIDI input. IXMLRepresentationController can describe structured parameter layouts, although host support for it varies.
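Tempo-aware plugins read the transport from ProcessContext. When the corresponding state flags are valid, it carries tempo (in BPM) and projectTimeMusic (the position in quarter notes). The standalone helper below takes those two values as plain doubles and finds the sample offset of the next sixteenth-note boundary in the current block, which is where a generative pattern would emit its next event. The function name and the sixteenth-note grid are illustrative.

```cpp
#include <cmath>

// Returns the sample offset of the first sixteenth-note boundary at or after
// the block start, or -1 if none falls inside this block.
// positionQuarters: ProcessContext::projectTimeMusic at the block start.
// tempoBpm: ProcessContext::tempo.
int nextSixteenthOffset(double positionQuarters, double tempoBpm,
                        double sampleRate, int numSamples) {
    const double samplesPerQuarter = sampleRate * 60.0 / tempoBpm;
    const double sixteenth = 0.25; // a sixteenth note is a quarter of a quarter note
    const double next = std::ceil(positionQuarters / sixteenth) * sixteenth;
    const double offset = (next - positionQuarters) * samplesPerQuarter;
    const int rounded = static_cast<int>(std::lround(offset));
    return rounded < numSamples ? rounded : -1;
}
```

For example, at 120 BPM and 48 kHz a quarter note lasts 24,000 samples. A block that starts at quarter-note position 0.24 therefore reaches the 0.25 boundary 240 samples in.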

The Rise of Artificial Intelligence in Audio Processing

Artificial intelligence has moved beyond experimental academic applications into production-grade audio tools. Neural networks now perform tasks ranging from intelligent denoising and source separation to generative composition and adaptive mastering. Models such as WaveNet, DDSP (Differentiable Digital Signal Processing), and various transformer architectures have demonstrated remarkable capabilities in modeling temporal audio dependencies.

For VST plugin developers, the challenge lies in adapting these typically computationally intensive models for real-time, low-latency inference. This is where combining the Steinberg VSTSDK with optimized inference engines becomes essential. Libraries such as RTNeural, ONNX Runtime, and TensorFlow Lite are being integrated directly into VST processor classes, with the goal of keeping inference well inside the audio callback’s time budget on modern hardware.

Integrating AI Models with Steinberg VSTSDK

Successful integration begins with model selection and optimization. A typical pipeline involves training models in Python using PyTorch or TensorFlow, exporting them to ONNX format, and implementing a C++ inference engine within the VST3 processor. The process() method becomes the critical junction where audio buffers are preprocessed, fed through the neural network, and post-processed before output.
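The "fed through the neural network" step has to run inside process() without allocating or locking. Libraries such as RTNeural achieve this with layer sizes fixed at compile time. The sketch below applies the same idea to a single fully connected layer with a tanh activation, using std::array so all storage is fixed. It is not RTNeural’s API, and in practice the weights would come from the exported model.

```cpp
#include <array>
#include <cmath>
#include <cstddef>

// One dense layer y = tanh(W x + b) with sizes fixed at compile time,
// so calling forward() from the audio thread never allocates.
template <std::size_t In, std::size_t Out>
struct DenseTanh {
    std::array<std::array<float, In>, Out> weights{}; // weights[o][i]
    std::array<float, Out> bias{};

    void forward(const std::array<float, In>& x, std::array<float, Out>& y) const {
        for (std::size_t o = 0; o < Out; ++o) {
            float acc = bias[o];
            for (std::size_t i = 0; i < In; ++i)
                acc += weights[o][i] * x[i];
            y[o] = std::tanh(acc);
        }
    }
};
```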

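Consider a neural EQ plugin. The audio processor analyzes spectral content using an FFT, feeds the resulting features into a recurrent neural network that predicts filter settings, and applies those settings with time-domain filters. All of this must fit within Ableton’s buffer size, typically 64 to 256 samples at 44.1 kHz or 48 kHz sample rates. An FFT frame can span several buffers, so any analysis latency the plugin introduces should be reported to the host through getLatencySamples(), allowing Live to compensate.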
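To put those buffer sizes in perspective, the time available for each callback is the buffer length N divided by the sample rate f_s. The host and the other devices processed in the same callback share that window, so the model gets only a fraction of it:

$$
t_{\text{buffer}} = \frac{N}{f_s}, \qquad \frac{64}{44\,100\ \text{Hz}} \approx 1.45\ \text{ms}, \qquad \frac{256}{48\,000\ \text{Hz}} \approx 5.33\ \text{ms}
$$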

Memory management is crucial. AI models can be large; developers utilize model quantization (INT8 instead of FP32), pruning, and dynamic loading to maintain a small memory footprint. The VSTSDK’s setState() and getState() methods must be extended to serialize not only traditional parameters but also model hyperparameters and training context.
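Symmetric per-tensor INT8 quantization is one common scheme. Each weight is stored as an 8-bit integer alongside a single float scale for the whole tensor, which cuts weight storage to roughly a quarter of FP32. The sketch is illustrative: production toolchains often add per-channel scales and zero points, and QuantizedTensor is a hypothetical type.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

struct QuantizedTensor {
    std::vector<std::int8_t> values;
    float scale = 1.0f; // real value ≈ values[i] * scale
};

// Symmetric per-tensor quantization: the largest |w| maps to 127.
QuantizedTensor quantizeInt8(const std::vector<float>& weights) {
    QuantizedTensor q;
    float maxAbs = 0.0f;
    for (float w : weights) maxAbs = std::max(maxAbs, std::fabs(w));
    q.scale = maxAbs > 0.0f ? maxAbs / 127.0f : 1.0f;
    q.values.reserve(weights.size());
    for (float w : weights) {
        const long r = std::lround(w / q.scale);
        q.values.push_back(static_cast<std::int8_t>(std::clamp(r, -127L, 127L)));
    }
    return q;
}

float dequantize(const QuantizedTensor& q, std::size_t i) {
    return static_cast<float>(q.values[i]) * q.scale;
}
```

Rounding to the nearest step bounds the error on each weight at half the scale. Whether that error is acceptable depends on the model and should be checked against the FP32 output.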

- Consider JUCE, whose VST3 wrapper is built on Steinberg’s VST3 SDK, to accelerate development while maintaining VST3 compatibility.

- Implement thread-safe communication between the audio processing thread and any background model retraining or analysis threads.

- Leverage SIMD instructions (AVX2, NEON) for accelerating both DSP and neural network operations.

- Profile extensively using Ableton’s performance meters and external tools like Intel VTune.
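For the thread-safe communication in the second point, a widely used pattern is a single-producer, single-consumer (SPSC) ring buffer. The audio thread pushes samples or feature values, a background analysis or retraining thread pops them, and neither side ever takes a lock. A minimal sketch follows. It assumes exactly one producer thread and one consumer thread, and its capacity must be a power of two.

```cpp
#include <atomic>
#include <cstddef>
#include <vector>

// Lock-free single-producer/single-consumer FIFO.
// One slot stays empty so that "full" and "empty" can be told apart.
class SpscRing {
public:
    explicit SpscRing(std::size_t capacityPow2)
        : buffer_(capacityPow2), mask_(capacityPow2 - 1) {}

    // Producer (audio thread): returns false instead of blocking when full.
    bool push(float v) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t next = (head + 1) & mask_;
        if (next == tail_.load(std::memory_order_acquire)) return false; // full
        buffer_[head] = v;
        head_.store(next, std::memory_order_release);
        return true;
    }

    // Consumer (background thread): returns false when empty.
    bool pop(float& v) {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        if (tail == head_.load(std::memory_order_acquire)) return false; // empty
        v = buffer_[tail];
        tail_.store((tail + 1) & mask_, std::memory_order_release);
        return true;
    }

private:
    std::vector<float> buffer_; // allocated once, in the constructor, off the audio thread
    const std::size_t mask_;
    std::atomic<std::size_t> head_{0};
    std::atomic<std::size_t> tail_{0};
};
```

When the ring is full, the audio thread drops data rather than waiting. For analysis feeds that is usually the right trade-off.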

Advanced VSTGUI Techniques for AI Visualization

AI parameters often lack the intuitive physical analogs of traditional controls. VSTGUI enables developers to create novel visualizations such as latent space navigators, real-time t-SNE projections of audio features, or animated neural activation maps. These visual elements transform abstract AI concepts into engaging, manipulable interfaces.

Custom views can be implemented by subclassing CView and overriding draw() and onMouseDown() methods. For an AI timbre transfer plugin, a 2D latent space map might allow users to drag a cursor between different instrumental characteristics, with the underlying model interpolating in real-time.
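At the core of such a latent map, a cursor position (x, y) in the unit square can bilinearly blend four corner embeddings, one per instrument. This is a sketch under assumptions. The corner vectors and the idea that the model accepts an interpolated latent vector are hypothetical, since real timbre-transfer models define their own conditioning.

```cpp
#include <array>
#include <cstddef>

template <std::size_t D>
using Latent = std::array<float, D>;

// Bilinear blend of four corner latents for a cursor at (x, y), both in [0, 1]
// (the caller clamps): c00 bottom-left, c10 bottom-right, c01 top-left, c11 top-right.
template <std::size_t D>
Latent<D> blendLatents(const Latent<D>& c00, const Latent<D>& c10,
                       const Latent<D>& c01, const Latent<D>& c11,
                       float x, float y) {
    Latent<D> out{};
    for (std::size_t i = 0; i < D; ++i) {
        const float bottom = c00[i] + x * (c10[i] - c00[i]);
        const float top = c01[i] + x * (c11[i] - c01[i]);
        out[i] = bottom + y * (top - bottom);
    }
    return out;
}
```

Exposing x and y as two normalized plugin parameters, rather than as private view state, lets Live automate the cursor and recall it with the session.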

Performance considerations remain paramount. All graphical operations must remain lightweight to prevent UI thread CPU spikes that could indirectly affect audio stability within Ableton Live.
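One way to keep a waveform or analysis view lightweight is to reduce the data to one min/max pair per pixel column before drawing. The draw() call then touches a few hundred points rather than every sample. The sketch assumes it runs on the GUI thread over a snapshot of samples, and the function name is illustrative.

```cpp
#include <algorithm>
#include <cstddef>
#include <utility>
#include <vector>

// Reduces `samples` to `columns` (min, max) pairs, one per pixel column.
std::vector<std::pair<float, float>> minMaxPerColumn(const std::vector<float>& samples,
                                                     std::size_t columns) {
    std::vector<std::pair<float, float>> out;
    if (columns == 0 || samples.empty()) return out;
    out.reserve(columns);
    for (std::size_t c = 0; c < columns; ++c) {
        // Integer bounds split the samples evenly; every column covers at least one sample.
        const std::size_t begin = c * samples.size() / columns;
        const std::size_t end = std::max(begin + 1, (c + 1) * samples.size() / columns);
        const auto [lo, hi] = std::minmax_element(samples.begin() + begin,
                                                  samples.begin() + std::min(end, samples.size()));
        out.emplace_back(*lo, *hi);
    }
    return out;
}
```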

Case Studies: AI-Enhanced VST Plugins in Ableton

Several pioneering plugins demonstrate the potential of this technological convergence. Neural DSP’s Archetype series, while not exclusively VSTSDK-based, showcases the commercial viability of AI-driven guitar tone modeling. Independent developers have released generative drum plugins that utilize recurrent networks to create evolving patterns that respond to Live’s transport and MIDI input.

One notable implementation involves a VST3 spectral processor that uses a convolutional autoencoder to decompose audio into transient, tonal, and noise components. Each component can then be individually processed using style transfer models before resynthesis. The VSTGUI interface presents this as three interlinked circular visualizers, allowing intuitive mixing of the AI-processed elements.

Performance Optimization and Real-Time Constraints

Real-time audio processing imposes strict deadlines: a single buffer overrun in Ableton Live results in an audible glitch, so AI inference must be meticulously optimized. Techniques include model distillation, where a smaller, faster student network is trained to mimic a large teacher network, and lookup tables for frequently computed activation functions.
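The lookup-table technique can be sketched for tanh. Values over a fixed input range are precomputed once, then evaluated with linear interpolation, and the result saturates outside that range. The table size and range are illustrative. Whether this actually beats std::tanh depends on the CPU and compiler, so it should be measured.

```cpp
#include <array>
#include <cmath>
#include <cstddef>

// tanh approximated by a 1024-entry table over [-5, 5] with linear interpolation.
// Beyond ±5, tanh is within about 1e-4 of ±1, so the result saturates there.
class TanhTable {
public:
    static constexpr std::size_t kSize = 1024;
    static constexpr float kRange = 5.0f;

    TanhTable() {
        for (std::size_t i = 0; i < kSize; ++i) {
            const float x = -kRange + 2.0f * kRange * static_cast<float>(i) / (kSize - 1);
            table_[i] = std::tanh(x);
        }
    }

    float operator()(float x) const {
        if (x <= -kRange) return -1.0f;
        if (x >= kRange) return 1.0f;
        const float pos = (x + kRange) * (kSize - 1) / (2.0f * kRange);
        const std::size_t i = static_cast<std::size_t>(pos);
        const float frac = pos - static_cast<float>(i);
        const std::size_t j = i + 1 < kSize ? i + 1 : i;
        return table_[i] + frac * (table_[j] - table_[i]);
    }

private:
    std::array<float, kSize> table_{};
};
```

Build the table once, for example in the processor’s constructor or initialize(), never inside process().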

Developers should implement adaptive processing that scales model complexity to the available CPU headroom. The VSTSDK does not report headroom directly, so plugins typically time their own process() calls against the buffer duration. Multi-threading strategies are essential skills for modern VST developers, for example running non-critical AI analysis on background threads while keeping processing on the audio thread deterministic.
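Here is one way to implement that adaptation. Each process() call is timed with a steady clock, and the ratio of processing time to buffer duration is smoothed. The plugin then steps between model tiers using separate up and down thresholds, so it does not flip back and forth every block. The three-tier scheme, thresholds, and smoothing constant are assumptions for illustration. Note that this measures only the plugin’s own cost, not the host’s total load.

```cpp
#include <chrono>

// Picks a model tier (0 = smallest, 2 = largest) from the smoothed share of
// the buffer duration that the plugin's own processing consumed.
class TierController {
public:
    int update(double processSeconds, double bufferSeconds) {
        const double load = processSeconds / bufferSeconds;
        smoothedLoad_ += 0.1 * (load - smoothedLoad_); // one-pole smoothing
        // Hysteresis: distinct down/up thresholds, plus a reset to a neutral
        // value after each change, keep the tier from oscillating.
        if (smoothedLoad_ > 0.5 && tier_ > 0) { --tier_; smoothedLoad_ = kNeutral; }
        else if (smoothedLoad_ < 0.15 && tier_ < 2) { ++tier_; smoothedLoad_ = kNeutral; }
        return tier_;
    }
    int tier() const { return tier_; }

private:
    static constexpr double kNeutral = 0.3;
    int tier_ = 1;
    double smoothedLoad_ = kNeutral;
};

// Times a piece of work with a monotonic clock, in seconds.
template <typename Work>
double timeSeconds(Work&& work) {
    const auto start = std::chrono::steady_clock::now();
    work();
    return std::chrono::duration<double>(std::chrono::steady_clock::now() - start).count();
}
```

Inside process(), the plugin would wrap its inference in timeSeconds() and pass the result together with numSamples / sampleRate to update(). The returned tier then selects the model for the next block.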

Challenges and Technical Solutions

Key challenges include:

- Latency accumulation from complex model inference

- Cross-platform consistency of AI behavior

- Plugin size bloat from embedding large model weights

- Ensuring numerical stability across different processor architectures

- Intellectual property concerns regarding training data

Solutions involve hybrid approaches combining traditional DSP with AI where appropriate, implementing robust fallback mechanisms, and utilizing Steinberg’s latest VST3 features for improved sidechain and auxiliary bus handling to create more sophisticated AI architectures.
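A robust fallback can be as simple as checking each block of model output for non-finite or out-of-range values and substituting the dry (or conventionally processed) signal for that block. The 4.0 limit (about +12 dBFS) is an arbitrary illustrative threshold, and the function name is hypothetical.

```cpp
#include <cmath>
#include <cstddef>

// Copies the model output to `out` if every sample is finite and within
// ±maxAbs; otherwise copies the dry input. Returns true if the model output was used.
bool outputWithFallback(const float* dry, const float* modelOut, float* out,
                        std::size_t numSamples, float maxAbs = 4.0f) {
    bool ok = true;
    for (std::size_t i = 0; i < numSamples && ok; ++i)
        ok = std::isfinite(modelOut[i]) && std::fabs(modelOut[i]) <= maxAbs;
    const float* src = ok ? modelOut : dry;
    for (std::size_t i = 0; i < numSamples; ++i) out[i] = src[i];
    return ok;
}
```

A hard switch between sources can click, so a short crossfade is gentler. After a failure, a recurrent model’s internal state should also be reset so the bad values do not persist.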

The Future: AI-Native VST Development

As hardware acceleration for AI improves through dedicated NPUs in consumer processors, we can expect more ambitious VST plugins. Future developments may include plugins that continuously learn from a producer’s workflow, automatically generating personalized sound palettes, or collaborating in real-time with cloud-based foundation models while maintaining local inference for critical paths.

Steinberg continues to evolve both the VSTSDK and VSTGUI. The VST 3.7 releases and any future iterations could add richer metadata that lets plugins describe AI capabilities to hosts such as Ableton Live, though this remains speculative.

Conclusion: Embracing the Intelligent Plugin Paradigm

The convergence of Steinberg VSTSDK, VSTGUI, and artificial intelligence represents a fundamental shift in how we conceptualize audio plugins. No longer mere effects or instruments, the next generation of Ableton plugins will function as creative collaborators, adaptive processors, and intelligent assistants. Developers who master these technologies will define the future of music production.

The technical journey requires proficiency in C++, digital signal processing, machine learning engineering, and GUI design. Yet the potential rewards, both creative and commercial, are substantial. Ableton Live remains central to many producers’ workflows, and the plugins built with Steinberg’s tools will help determine how far the boundaries of electronic music can be pushed in the AI era.

Ever-Evolving Landscape: Steinberg’s VSTSDK (Virtual Studio Technology Software Development Kit) and VSTGUI (VST Graphical User Interface)

In the ever-evolving landscape of digital audio production, plugin developers face the challenge of creating sophisticated, user-friendly tools that push the boundaries of sound design. Steinberg’s VSTSDK (Virtual Studio Technology Software Development Kit) and VSTGUI (VST Graphical User Interface) have long been cornerstones for building high-performance audio plugins. But as artificial intelligence (AI) permeates every facet of software development, the integration of AI into these frameworks heralds a new era for plugin creation. This article delves into how VSTSDK and VSTGUI, when augmented with AI, are shaping the future of making plugins—enabling smarter, more adaptive audio processing that responds to user needs in real-time.

From automated parameter optimization to generative sound design, AI’s role in VST plugin development is not just innovative; it’s transformative. We’ll explore the technical underpinnings, practical implementations, and forward-looking possibilities, providing developers with actionable insights to harness this synergy.

Understanding Steinberg’s VSTSDK

The VSTSDK is Steinberg’s comprehensive toolkit for developing VST plugins, which load in major digital audio workstations (DAWs) such as Cubase and Ableton Live. (Logic Pro is an exception: it does not host VST plugins and uses Apple’s Audio Units format instead.) At its core, the VSTSDK provides the foundational APIs for audio processing, MIDI handling, and plugin architecture, with cross-platform support for Windows, macOS, and Linux.

Key Components of VSTSDK

- Audio Processing Engine: Handles real-time signal processing with low-latency requirements, supporting formats like VST3 for advanced features such as sidechain processing and multiple I/O configurations.

- MIDI and Parameter Management: Enables dynamic control of plugin parameters via MIDI CC or automation, crucial for expressive music production.

- Host Communication: Facilitates seamless integration with DAW hosts through standardized interfaces, including editor and processor classes for modular design.

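Technically, the VSTSDK uses C++ for performance-critical code; bindings for other languages exist only as community projects. Its modular structure lets developers focus on core algorithms while the SDK supplies boilerplate such as the plugin factory, bus and buffer descriptions, and parameter plumbing. Thread safety, however, remains the developer’s responsibility: process() runs on a real-time audio thread, while editor code runs on the UI thread.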

Evolution and Versioning

The VST 3.7.x releases add incremental interfaces, for example IProcessContextRequirements, through which a plugin declares which transport information it needs from the host. This evolution matters for AI integration because well-defined hooks into the audio stream let machine learning models work alongside it without compromising real-time performance.

Exploring VSTGUI: Building Intuitive Interfaces

VSTGUI complements the VSTSDK with a robust framework for building plugin graphical user interfaces (GUIs). Rather than depending on a third-party framework such as JUCE, it draws through native platform APIs: Direct2D on Windows, Core Graphics on macOS, and Cairo on Linux. As a result, a plugin’s custom-drawn interface looks consistent across platforms.

Core Features of VSTGUI

- Customizable Controls: From knobs and sliders to complex waveforms and spectral displays, VSTGUI supports vector-based drawing for scalable, high-DPI interfaces.

- Event Handling: Manages user interactions with efficient event loops, integrating seamlessly with VSTSDK’s parameter system for real-time updates.

- Styling and Accessibility: Colors, fonts, and bitmaps can be defined in a UIDescription file, which makes alternate themes such as dark and light variants practical, and views can take keyboard focus for keyboard navigation.

Under the hood, VSTGUI organizes the interface as a view hierarchy. A CFrame sits at the root and contains CViewContainer nodes, which in turn hold individual CView elements that can be animated. This structure suits AI-driven interfaces, where elements might adjust dynamically based on learned user behaviors or audio analysis.

Integration with VSTSDK

VSTGUI and VSTSDK are designed to work in tandem: the SDK handles the backend processing, while VSTGUI provides the user experience. Developers typically subclass VSTGUI’s CView, or CControl for views that edit a value, to create custom components. These components link to VST parameters through control tags, keeping audio and visual feedback synchronized.

The Rise of AI in Plugin Development

AI is no longer a buzzword in audio; it’s a practical tool reshaping how plugins are built and used. Machine learning models, powered by frameworks like TensorFlow or PyTorch, can analyze audio in real-time, predict user preferences, and even generate novel effects.

AI Applications in Audio Plugins

- Intelligent Processing: AI-driven equalizers that auto-adjust curves based on genre detection or neural networks for noise reduction that adapt to varying source material.

- Generative Tools: Plugins using GANs (Generative Adversarial Networks) to create synthetic instruments or AI-assisted mixing that suggests balance adjustments.

- User Personalization: Recommendation engines within plugins that learn from session history to load presets or adjust parameters automatically.

From a technical standpoint, AI integration requires careful consideration of computational overhead. Edge AI—running models on-device—leverages optimized libraries like ONNX Runtime to maintain low latency, essential for VST plugins in live performance scenarios.

Integrating AI with VSTSDK and VSTGUI

Combining AI with VSTSDK and VSTGUI involves bridging the gap between audio processing pipelines and ML inference engines. Developers can embed AI models directly into the plugin’s processor class or use external services via APIs for heavier computations.

Technical Implementation Steps

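- Model Preparation: Train or select pre-trained models (e.g., an audio classifier trained on Librosa features) and export them to a plugin-compatible format such as TensorFlow Lite or ONNX. Librosa is a Python library, so the same features must be reimplemented in C++ inside the plugin.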

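- SDK Integration: In the VSTSDK, implement IAudioProcessor (typically by subclassing AudioEffect) and call the model from its process() method; processReplacing() belongs to the older VST2 API. Load the model outside the audio thread, for example in initialize() or setActive(), so that process() never blocks on it.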

- GUI Enhancements: Use VSTGUI to visualize AI outputs—such as heatmaps for spectral analysis or progress bars for training feedback—via custom CView subclasses.

- Optimization: Employ quantization and pruning to reduce model size, and implement asynchronous processing to avoid audio glitches.
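For the model-preparation step, any features computed with Librosa at training time have to be recomputed in C++ inside the plugin. The two implementations must agree, or the model will see different inputs than it was trained on. The sketch below computes two simple frame-level features, RMS energy and zero-crossing rate, without allocating. It uses plain textbook definitions and is not guaranteed to match Librosa’s defaults, which add framing, padding, and centering.

```cpp
#include <cmath>
#include <cstddef>

struct FrameFeatures {
    float rms = 0.0f;
    float zeroCrossingRate = 0.0f; // sign changes per sample
};

// Computes RMS and zero-crossing rate for one frame.
FrameFeatures extractFrameFeatures(const float* frame, std::size_t n) {
    FrameFeatures f;
    if (n == 0) return f;
    double sumSquares = 0.0;
    std::size_t crossings = 0;
    for (std::size_t i = 0; i < n; ++i) {
        sumSquares += static_cast<double>(frame[i]) * frame[i];
        if (i > 0 && (frame[i - 1] < 0.0f) != (frame[i] < 0.0f)) ++crossings;
    }
    f.rms = static_cast<float>(std::sqrt(sumSquares / static_cast<double>(n)));
    f.zeroCrossingRate = static_cast<float>(crossings) / static_cast<float>(n);
    return f;
}
```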

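For example, a reverb plugin could use AI to analyze room acoustics from user-uploaded impulse responses and adjust its parameters dynamically. A C++ sketch against the VST3 SDK might look like the following. ReverbModel.h and everything it declares (ReverbModel, extractAudioFeatures, applyReverbWithAIParams) are hypothetical placeholders.

```cpp
#include "public.sdk/source/vst/vstaudioeffect.h"
#include "ReverbModel.h" // hypothetical: ReverbModel, extractAudioFeatures, applyReverbWithAIParams

using namespace Steinberg;
using namespace Steinberg::Vst;

class AIReverbProcessor : public AudioEffect
{
public:
    tresult PLUGIN_API process (ProcessData& data) override
    {
        // Hosts may call process() with no audio just to deliver parameter changes.
        if (data.numSamples == 0 || data.numInputs == 0 || data.numOutputs == 0)
            return kResultOk;

        // Assumes 32-bit float processing was accepted in setupProcessing().
        Sample32** in = data.inputs[0].channelBuffers32;
        Sample32** out = data.outputs[0].channelBuffers32;

        // Run the model on the first input channel. The model is assumed to be
        // loaded (e.g. in setActive()); nothing here may allocate, lock, or do I/O.
        auto features = extractAudioFeatures (in[0], data.numSamples);
        auto predictions = model->infer (features);
        applyReverbWithAIParams (in, out, data.outputs[0].numChannels,
                                 data.numSamples, predictions);
        return kResultOk;
    }

private:
    ReverbModel* model = nullptr; // hypothetical model object, loaded off the audio thread
};
```

This sketch shows where inference slots into the real-time loop. The GUI should not read predictions from the audio thread directly. Instead, the processor can report them as output parameter changes (ProcessData::outputParameterChanges), which the host passes to the edit controller so that VSTGUI controls can update.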

Tools and Libraries

- Steinberg’s Extensions: The VSTSDK has no built-in machine learning support, so inference comes from third-party libraries such as ONNX Runtime or RTNeural. DSP languages like FAUST can generate the surrounding C++ DSP code.

- Cross-Platform AI: Use Core ML on macOS or DirectML on Windows for hardware acceleration.

Case Studies: AI-Powered VST Plugins

Real-world examples show the potential. iZotope’s Neutron uses machine learning for mix assistance and ships in VST3 format, among others, with its own custom interface. Open-source projects also demonstrate the VSTSDK’s flexibility: in one AI-enhanced delay plugin on GitHub, an LSTM model predicts echo patterns that are visualized as dynamic waveforms in the interface.

In one reported case, a developer integrated a diffusion model for vocal synthesis, achieving sub-10ms latency by offloading training to the cloud and keeping inference in the plugin core. Such implementations reportedly cut development time by up to 40% because AI automates tedious parameter tuning.

Challenges and Solutions in AI-VST Integration

Despite the promise, hurdles remain. Real-time constraints demand lightweight models. One solution is model distillation, which shrinks a model with limited loss of accuracy.
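In distillation, the small student is trained to match the large teacher’s softened output distribution. A commonly used soft-target term is the KL divergence between teacher and student softmax outputs at temperature T, scaled by T². Training normally happens in Python. The C++ sketch below only computes this loss for one example to make the formula concrete, and it assumes both logit vectors are non-empty and the same length.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Softmax of logits / T, shifted by the max for numerical stability.
std::vector<double> softmaxT(const std::vector<double>& z, double T) {
    const double m = *std::max_element(z.begin(), z.end());
    std::vector<double> p(z.size());
    double sum = 0.0;
    for (std::size_t i = 0; i < z.size(); ++i) {
        p[i] = std::exp((z[i] - m) / T);
        sum += p[i];
    }
    for (double& v : p) v /= sum;
    return p;
}

// Soft-target distillation loss: T^2 * KL(teacher_T || student_T).
double distillationLoss(const std::vector<double>& teacherLogits,
                        const std::vector<double>& studentLogits, double T) {
    const auto pt = softmaxT(teacherLogits, T);
    const auto ps = softmaxT(studentLogits, T);
    double kl = 0.0;
    for (std::size_t i = 0; i < pt.size(); ++i)
        if (pt[i] > 0.0) kl += pt[i] * std::log(pt[i] / ps[i]);
    return T * T * kl;
}
```

The T² factor keeps the gradient magnitude of this term comparable across temperatures when it is mixed with an ordinary hard-label loss.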

Common Pitfalls

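- Latency Issues: Keep models small enough for CPU inference on the audio thread. GPU delegation can help with larger models, but transfer and scheduling overhead often exceeds small-buffer deadlines, so hybrid CPU/GPU pipelines usually reserve the GPU for non-real-time analysis.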

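- Compatibility: Bundled AI libraries can clash with other plugins loaded in the same host process (for example, two plugins shipping different versions of one inference runtime). Link them statically or hide their symbols, and test in each target DAW on every supported operating system.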

- Ethical Concerns: Address bias in AI audio models through diverse training data, ensuring fair representation across genres.

Debugging tools such as the validator included with the VST3 SDK help verify plugin stability after AI integration.

The Future: AI-Driven Plugin Ecosystems

Looking ahead, VSTSDK and VSTGUI could evolve toward AI-native frameworks. Imagine plugins that self-optimize via reinforcement learning or collaborate in DAW sessions using federated learning. Cloud-AI extensions could also let plugins tap into vast datasets for hyper-personalized soundscapes, although any such extensions remain speculative.

This future democratizes plugin development: non-experts could use no-code AI tools built on VSTGUI to prototype effects, while pros leverage advanced SDK features for bespoke creations. The synergy promises a renaissance in audio innovation, where plugins aren’t just tools—they’re intelligent collaborators.

Conclusion

Steinberg’s VSTSDK and VSTGUI, combined with AI, are poised to redefine plugin development, blending technical precision with intelligent adaptability. Developers equipped with these tools can create plugins that anticipate user needs, streamline workflows, and open creative possibilities that were previously out of reach. As AI matures, this integration is likely to become a key differentiator in a competitive audio industry. Start experimenting today: download the latest VSTSDK, prototype an AI feature, and join the future of sound design.